
The drop in utilization and network I/O correlates strongly with both the increase in disk utilization and the time period in which we began experiencing the issue. The overall node / VM utilization is generally flat prior to this chart for the previous 30 days, with a few bumps relating to production site traffic, update pushes, etc.

Metrics After Issue Mitigation (Added Postmortem)

To the above point, here are the metrics for the same node after scaling up and then back down (which happened to alleviate our issue, but does not always work - see answers at bottom).

Notice the 'dip' in CPU and network? That's where the net/http: TLS issue impacted us, and when the AKS server was unreachable from kubectl. It seems it wasn't talking to the VM / node, in addition to not responding to our requests.

As soon as we were back (scaled the number of nodes up by one, and back down - see answers for workaround), the metrics (CPU etc.) went back to normal and we could connect from kubectl. This means we can probably create an alarm off of this behavior (and I have an issue in asking about this on the Azure DevOps side).

Node Size Potentially Impacts Issue Frequency

Zimmergren over on GitHub indicates that he has fewer issues with larger instances than he did running bare-bones smaller nodes. This makes sense to me and could indicate that the way the AKS servers divvy up the workload (see next section) could be based on the size of the instances.
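The scale-up-by-one-then-back-down workaround mentioned above can be sketched roughly as follows. This is a minimal sketch, not an official procedure: it assumes the Azure CLI (`az`) is installed and logged in, and the resource group name, cluster name, and node count are hypothetical placeholders. It defaults to a dry run that only prints the commands.

```python
import subprocess
from typing import List


def build_scale_commands(resource_group: str, cluster: str,
                         current_nodes: int) -> List[List[str]]:
    """Return two `az aks scale` invocations: up by one node, then back down."""
    def scale(count: int) -> List[str]:
        return [
            "az", "aks", "scale",
            "--resource-group", resource_group,
            "--name", cluster,
            "--node-count", str(count),
        ]
    return [scale(current_nodes + 1), scale(current_nodes)]


def run_workaround(resource_group: str, cluster: str, current_nodes: int,
                   dry_run: bool = True) -> None:
    """Print the commands (dry run) or execute them in order."""
    for cmd in build_scale_commands(resource_group, cluster, current_nodes):
        if dry_run:
            print(" ".join(cmd))
        else:
            # Stops on the first failing command.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # "my-rg" and "my-aks" are placeholders, not names from the original post.
    run_workaround("my-rg", "my-aks", current_nodes=3)
```

As noted in the post, scaling does not always clear the problem and may disrupt your app, so treat this as something to try rather than a fix.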

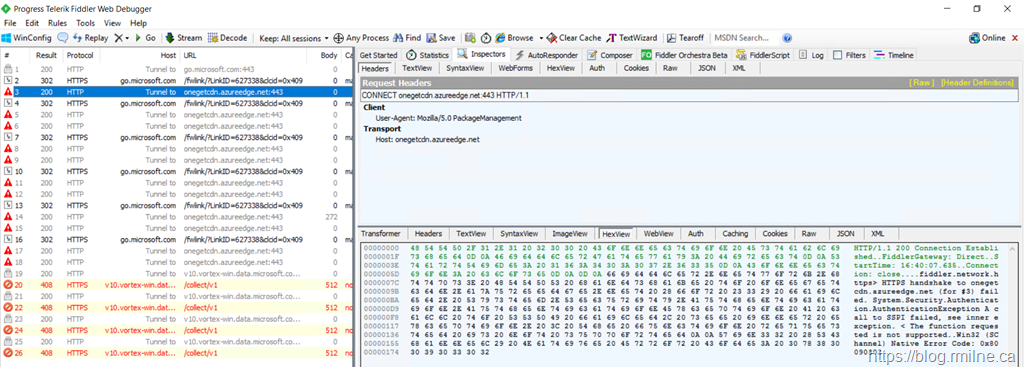
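The idea of alarming on the simultaneous dip in CPU and network could look something like the sketch below. It is only an illustration of the logic: the function names, the 50% drop threshold, and the sample numbers are all assumptions, and fetching the real metrics from Azure is left out entirely.

```python
from statistics import mean
from typing import Sequence


def is_dip(baseline: Sequence[float], recent: Sequence[float],
           drop_fraction: float = 0.5) -> bool:
    """True if the recent average fell more than drop_fraction below baseline."""
    if not baseline or not recent:
        raise ValueError("baseline and recent must both be non-empty")
    base = mean(baseline)
    if base <= 0:
        return False  # nothing to drop from
    return mean(recent) < base * (1 - drop_fraction)


def should_alarm(cpu_baseline: Sequence[float], cpu_recent: Sequence[float],
                 net_baseline: Sequence[float], net_recent: Sequence[float],
                 drop_fraction: float = 0.5) -> bool:
    """Alarm only when CPU and network both dip, as seen during the outage."""
    return (is_dip(cpu_baseline, cpu_recent, drop_fraction)
            and is_dip(net_baseline, net_recent, drop_fraction))


if __name__ == "__main__":
    # Hypothetical sample values, not data from the affected cluster.
    print(should_alarm([20, 22, 21], [5, 4, 6], [100, 110, 105], [10, 12, 8]))
```

Requiring both metrics to drop is a design choice meant to avoid alarming on ordinary quiet periods where only one of them falls.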

My question (to MS and anyone else) is: Why is this issue occurring, and what workaround can be implemented by the users / customers themselves, as opposed to by Microsoft Support?

There have obviously been 'a few' other questions about this issue:

- Managed Azure Kubernetes connection error
- Can't contact our Azure-AKS kube - TLS handshake timeout
- Azure Kubernetes: TLS handshake timeout (this one has some Microsoft feedback)

There are also multiple GitHub issues posted to the AKS repo.

The current best solution is to post a help ticket and wait, or to re-create your AKS cluster (maybe more than once, cross your fingers, see below), but there should be something better. At least please grant AKS preview customers, regardless of support tier, the ability to upgrade their support request severity for THIS specific issue. You can also try scaling your cluster (assuming that doesn't break your app).

Many of the above GitHub issues have been closed as resolved, but the issue persists. Previously there was an announcements document regarding the problem, but no such status updates are currently available even though the problem continues to present itself.

I am posting this as I have a few new tidbits that I haven't seen elsewhere, and I am wondering if anyone has ideas as far as other potential options for working around the issue.

The first piece I haven't seen mentioned elsewhere is resource usage on the nodes / VMs / instances that are being impacted by the above kubectl 'Unable to connect to the server: net/http: TLS handshake timeout' issue. The node(s) on my impacted cluster look like the chart above.

This directory must reside outside of the volume or dataset being used by the jail. This is why it is recommended to create a separate dataset to store jails, so the dataset holding the jails is always separate from any datasets used for storage on the FreeNAS® system. Destination: select an existing, empty directory within the jail to link to the Source storage area. If that directory does not exist yet, enter the desired directory name and check the Create directory box.
